Running Umbraco on .net core on a Free Oracle Cloud Linux VM

You generally hear most people talking about using app services or serverless architecture to host websites these days, as they’re seen as the ‘cooler’, in thing, with far fewer people still talking about more traditional full-scale servers. Always one for bucking trends, though, I still retain much love for the good old traditional server, even if these days it is usually virtualised on top of a cloud platform rather than running on dedicated hardware. With a VM it’s nice to be fully in control, with the freedom to set up multiple different services or perform tasks outside the norm all in the same place, in addition to the regular ‘host a basic website’ requirement. It also gives far more flexibility to just play around and experiment, learning things about software and server admin (and how to inevitably break and re-fix them!) that you just can’t do in the limited provisioned hosting environments. However, full machines capable of anything useful also tend to come at quite a cost, particularly if they need to involve Windows too, so when you’re trying to use this sort of approach for personal projects without access to a bottomless credit card you do have to be a bit more creative. Most of the big cloud providers claim to have a free tier, but full VMs, if available at all, are usually limited to very low-spec machines for time-boxed periods, on Linux only.

Earlier in the year I stumbled upon the free offering from Oracle Cloud. Oracle are better known in the enterprise database space, and don’t have the market share of established players like Amazon’s AWS or Microsoft Azure when it comes to cloud hosting. As a result, though, they’re playing catch-up, so they have some surprisingly generous free offerings to try and get people bought in. You do have to remember it is still Oracle, so they could bait and switch or kill the account at any time, and you probably shouldn’t be running anything business critical on these without a backup plan. On their free tier it was possible to get 2 single-core x64 virtual machines with 1GB of RAM each. Nothing too special there! The more interesting offering, however, was that a quad-core VM with up to 24GB of RAM and 200GB of disk space could also be provisioned for free, subject to the caveat that it runs on Ampere processors. These use the ARM architecture and are thus Linux only (as Microsoft still haven’t publicly released Windows Server for ARM). Unlike with Windows, though, the different architecture isn’t a big showstopper for a large number of Linux applications: most Linux software either already has been, or can be, recompiled for ARM and will then run much the same as on x86/x64. ARM processors have tended to look a bit weak on performance in the past, a perception largely skewed by the likes of the Raspberry Pi, which, although amazingly popular, has historically not had a particularly powerful processor on the board, as raw grunt is not its main goal (that’s touching on a blog post for another day though).
I decided to give the ARM-based cloud server a whirl anyway, originally intending to use it just for some lighter things, like a VPN tunnel providing a free static IP I can route to when on ISPs with dynamic IPs or using CGNAT (carrier-grade NAT, common on mobile networks and newer fibre altnets, which means sharing a public IP with multiple users at the same time). However, once the speed benchmarks started coming in it quickly became clear just how much power was available to the VM on this platform, so it’s since been expanded and put to use for much heavier tasks like on-the-fly media encoding, which it sails through with ease.

This got me thinking though. Now that I had this server already running, could I host my Umbraco sites on it too? Historically they’ve always been hosted on a low-power Windows server I keep in-house, as the older .net Framework could only run on Windows-based machines. Although this in-house server is responsive enough, it’s deliberately not a fast machine, as the focus was always on keeping energy consumption low and green. However, Umbraco’s move to cross-platform .net core from Umbraco 9, with subsequent support for SQLite removing the need for Microsoft SQL Server as a database too, has opened up Linux as a much more viable platform. It isn’t something that works immediately on most Linux distributions, though, so some work was going to be required to get it going, hence this little blog post about getting Umbraco running on an Oracle Cloud Linux ARM VM. The same concepts could be applied to most Linux server machines.

The Oracle Cloud Ampere VMs come with Ubuntu 22.04 available out of the box, and Canonical have done a good job of making the software repo for their Ubuntu ARM distro quite fully fledged. This means setting up the elements more commonly associated with Linux hosting, like Apache, NGINX and PHP, is quite straightforward. As this is readily covered online and quite well documented, or like me you may well have done it on your server already, it isn’t something I’m going to focus on here. If anyone is starting with a completely blank new VM and is curious, though, such services can easily be added via apt, or by installing virtual hosting control panels such as Virtualmin or Plesk, which will usually come with them. As asp.net core comes with its own built-in Kestrel server, for the sake of trying this experiment out it is possible to serve the website pages entirely from that instead, without Apache/NGINX (though this should not be done for production use). However, .net core itself is rarely present on Linux, even in provisioned hosting environments, so it has to be added yourself. This can be done via downloads put out by Microsoft themselves, but unless you’re looking for the bleeding edge, most of the big distributions now also include it natively in their own package repos. As I was looking to use the slightly older .net Core 6 for this testing, that being the most recent long-term support release at the time, this meant a simple:-

sudo apt install aspnetcore-runtime-6.0

Was enough to get the required runtime from Ubuntu’s own package repo. Once that was in place, the dotnet command was immediately available on the Linux terminal in much the same way as it would be on Windows. Would this be enough to run Umbraco, though? This is where I made my usual mistake of trying to run before walking. As the main personal project I already had to hand is quite a big Umbraco site (over 50,000 nodes), I decided to just publish it from Visual Studio, chuck it onto the server and see what would happen. My expectation was that it wouldn’t work, as there’d be no database behind it, but that I would at least get an error screen telling me this, so I’d know .net core was executing the code as it should. However, upon running it via the usual ‘dotnet name.dll’ command I got… well… nothing. It would start running in the terminal, but without any of the usual ‘info’ output, instead just sitting there waiting. No site was served on the standard internal localhost:5001 URL, just a browser timeout, and CTRL-C would not even end the task back in the terminal, instead requiring a separate session and a ‘kill -9 xxx’ command to terminate dotnet. It’s at this point I decided to do what I really should have done from the start and simplify everything right back to a basic hello world project from Visual Studio. So a fresh ASP.NET Core Web App project was created, with no database dependencies, no additional NuGet packages, and no code changes over what ships with the template. After publishing and uploading it, this project ran fine with the dotnet command, both on the default Kestrel localhost:5001 port (i.e. ‘dotnet name.dll’) and when specifying a custom binding via the command line (i.e. the ‘dotnet name.dll --urls=http://x.x.x.x:port’ format, though normally this should be specified in config or code instead).
As well as returning a browsable site this time (after also opening up the relevant firewall/ingress ports in Oracle Cloud), the framework immediately started outputting the usual green ‘info’ messages when the dotnet command was issued, and it could be terminated straight away with CTRL-C. This proved the core framework was up and running, so the earlier failures had to be specific to my Umbraco instance rather than a wider .net problem.
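For anyone repeating these steps, a minimal sketch of the two checks above is shown here: confirming the ASP.NET Core 6 runtime is actually registered, then starting a published app on an explicit binding. The name.dll file and port 5000 are placeholders rather than values from my setup, and binding to 0.0.0.0 exposes Kestrel publicly, which is only appropriate for this sort of quick experiment.

```shell
#!/usr/bin/env bash
# Minimal sketch: check for the ASP.NET Core 6 shared runtime, then start a
# published app on an explicit binding. "name.dll" and port 5000 are
# placeholders, not values from the original setup.

# Succeeds if `dotnet --list-runtimes` reports a Microsoft.AspNetCore.App 6.x runtime.
has_aspnetcore6() {
  dotnet --list-runtimes 2>/dev/null | grep -q '^Microsoft\.AspNetCore\.App 6\.'
}

# Starts a published app on the given URL via the --urls command-line switch.
run_app() {
  local dll="$1" url="${2:-http://0.0.0.0:5000}"
  dotnet "$dll" --urls "$url"
}

# Example usage:
#   has_aspnetcore6 && run_app ./name.dll http://0.0.0.0:5000
# The same binding can come from the environment instead of the command line:
#   ASPNETCORE_URLS=http://0.0.0.0:5000 dotnet ./name.dll
```

The runtime check relies on the ‘Microsoft.AspNetCore.App 6.x.y [path]’ line format that dotnet prints for each installed shared runtime.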

To try and track this down, I republished and re-uploaded a fresh copy of the Umbraco project. The issue persisted; however, checking the folders on the filesystem showed that files were being written. Most importantly, one of these was a fresh Umbraco log file in the Logs folder, which is always a goldmine for finding issues! I pulled down this file and found… well… basically 5 lines.

Acquiring MainDom

Acquired MainDom

Stopping ({SignalSource})

Released ({SignalSource})

Application is shutting down...

Which, as Umbraco log files go, is very sparse, and didn’t immediately reveal a lot. With some extra testing I was able to establish that the three stopping/released/shutting down messages were all triggered by the CTRL-C interrupt, even though control was never returned to the terminal afterwards, leaving only the first two messages as pointers to anything. Even those two were enough to suggest where the problem lay, though. In Umbraco, MainDom is the lock that makes sure only one running instance acts as the main application domain, and depending on configuration that lock can be coordinated through the database. As this project still had its existing database listed in its connection strings, which I’d rightly not have expected it to reach from this new server, I tried removing that connection string from appsettings, effectively returning it to a fresh Umbraco instance state. After killing and restarting the site on the terminal again, a response suddenly came through in the browser, the standard Umbraco installer screen appeared, and CTRL-C worked correctly again on the terminal. I’m sure we normally get some sort of error on a database failure when hosting on Windows, rather than the odd ‘silent death connection timeout’ that happened here, but maybe that’s down to some recent changes (this is still an older version of Umbraco 10) or differences between how things work on Linux and Windows. The key thing was that it narrowed the problem down to a database issue rather than anything in the .net core application itself. So it was time to move on to getting the database running too!
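Before stripping the connection string out, one quick way to confirm that a hang like this is down to an unreachable database server, rather than the application itself, is to probe the server’s port directly. The sketch below does that with bash’s built-in /dev/tcp redirection and the coreutils timeout command; the host name, port 1433 (SQL Server’s default) and three-second limit are illustrative assumptions rather than anything from my setup.

```shell
#!/usr/bin/env bash
# Minimal sketch: check whether a database server port accepts TCP
# connections within a time limit. Host, port 1433 (SQL Server's default)
# and the 3-second limit are illustrative assumptions.

db_reachable() {
  local host="$1" port="${2:-1433}" secs="${3:-3}"
  # Open (and immediately discard) a TCP connection in a child bash; the exit
  # status is 0 only if the connection succeeded before the timeout expired.
  timeout "$secs" bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$host" "$port" 2>/dev/null
}

# Example usage, with sqlserver.example.lan standing in for the real host:
#   db_reachable sqlserver.example.lan 1433 || echo "database not reachable"
# To see which connection string the site is actually configured with:
#   grep -n umbracoDbDSN appsettings*.json
```

A failed probe doesn’t prove the database is the only problem, but it does point in the same direction as the sparse log did here.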

Normally with Umbraco on Linux, your options are to either install Microsoft SQL Server for Linux or use SQLite instead. Unfortunately SQL Server on Linux still only supports the x64 architecture and not ARM, so installing the full database on this ARM VM was not an easy option, as far as I could find anyway. This may change in the future if the ARM version of Windows Server ever officially appears, or it may not, as Microsoft seem keener to push everyone towards their newer Azure SQL Edge product, which does have ARM support. That left me with Umbraco’s alternative SQLite database option. Given the sheer size of the content/media tree on my test instance, I decided as a first option to just try converting the Microsoft SQL database to an SQLite database using some external software (available at this GitHub Repo if you’re interested, though don’t rush to try it until you read on further). After a minute or two of converting I had a nice fresh .db file to try with.

On the running instance of Umbraco I first went through a full fresh install, solely to create a working .db file and auto-configure appsettings to use it. This all worked and got me into a nicely working but empty back office. As soon as this was done, I stopped the application, replaced the DB file in the default /Umbraco/Data location with the converted version from above, and then started it up again to see what would happen. The front end still responded rather than timing out as it had with the earlier database issues, but only returned a white screen. The back office similarly returned only an empty white screen. So, following the usual debugging procedure once more, I brought down the Umbraco log file again. Unlike the sparse file from earlier, this one was considerably more fleshed out, as Umbraco had actually been able to fully load a few times during the fresh installs. In the most recent runs after switching over the database file, quite a few errors around GUID formats now showed (specifically saying ‘guid should contain 32 digits with 4 dashes’). The only references I could find to these all seemed to be for very old Umbraco installations in the Umbraco 4-7 era where people were using incompatible plugins, so it seemed quite likely this simply indicated that the direct database conversion approach won’t work. Of course it was highly unlikely it would be that simple, but you can’t blame me for trying! Thankfully we have other battle-tested tools available in the community, and so it was back to the trusty old method of uSyncing ‘All Of The Things’ out of the old Microsoft SQL database on Windows and importing them back into the fresh new SQLite one on Linux.
Unfortunately this did mean turning the uSync MediaHandler back on for my project, which I normally keep disabled because the size of the media library takes this site past 50,000 nodes, but needs must!
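If you want to repeat the database swap-in test described above, it is worth doing with the site stopped and a backup of the working file kept. Below is a minimal sketch; the default umbraco/Data/Umbraco.sqlite.db path is an assumption based on Umbraco’s usual |DataDirectory| location, so check umbracoDbDSN in appsettings.json first. It also moves aside any -wal/-shm sidecar files, since SQLite could otherwise try to replay a stale write-ahead log from the old database against the new file. This only makes the swap itself safer; it won’t fix the GUID format errors that came from the direct conversion.

```shell
#!/usr/bin/env bash
# Minimal sketch: swap a replacement SQLite database into an Umbraco site
# while the app is stopped, keeping timestamped backups of the current files.
# The default target path is an assumption; check umbracoDbDSN first.

swap_sqlite_db() {
  local site="$1" newdb="$2"
  local target="${3:-$site/umbraco/Data/Umbraco.sqlite.db}"
  local stamp f
  stamp=$(date +%Y%m%d%H%M%S)

  [ -f "$newdb" ] || { echo "replacement database not found: $newdb" >&2; return 1; }
  mkdir -p "$(dirname "$target")"

  # Back up the current database and move aside any WAL/shared-memory
  # sidecar files so they cannot be applied to the replacement file.
  for f in "$target" "$target-wal" "$target-shm"; do
    if [ -e "$f" ]; then
      mv "$f" "$f.bak.$stamp"
    fi
  done

  cp "$newdb" "$target"
}

# Example usage (stop the site first):
#   swap_sqlite_db /var/www/mysite ./converted.db
```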

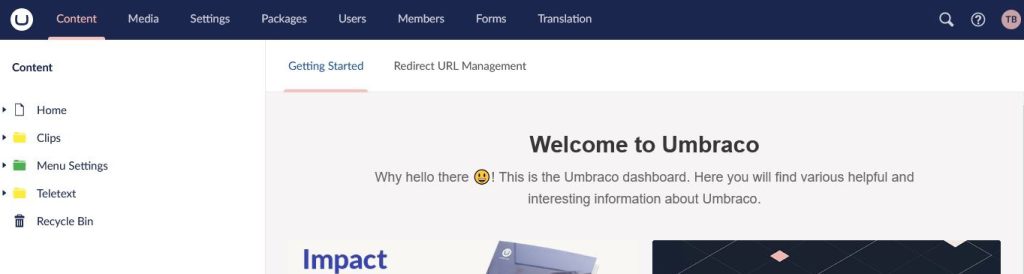

After a very long export, upload, then import (and by long I don’t mean ‘go away and make a cup of coffee’ long, I mean ‘go away and make dinner, but intermittently pop back to reload another tab in admin to keep it logged in’ long), I fired up the back office again. This time round it remained fully functional, and was finally complete with a full content and media library. The last test was running the front end again. Alas, this still returned the same blank screen. Down came the log file again, which quickly revealed this was largely down to my own code. I’m still on an older version of Umbraco 10, which pre-dates the recent official addition of Entity Framework Core support, so at the time I’d added this in myself for some custom database table integration. It seems, however, that the SQLite connection string isn’t fully supported by the SQL Server integration my EF Core code was set up to use. This is definitely a me-problem, though, and would require some reworking of my custom code to fix. So for the sake of testing here I simply removed all the calls to the custom code, leaving just regular Umbraco templating code in place. At which point everything ran successfully, and I had a fully browsable site once more, all running off Linux on ARM on Oracle Cloud.

So as a proof of concept this works. Whether or not I’ll move over to using this full time for hosting will depend on what happens later this year, as it would require some more steps with this particular project:-

- Add in reverse proxying – In all of this testing, I’ve only used the default built-in Kestrel server in asp.net core, and just opened up a port to it publicly in the firewall. This is not a recommended approach for production use, however. For production, a more fully fledged web server such as Apache or NGINX should be exposed on the public side, and then set up to reverse proxy requests down to Kestrel, which is only exposed internally.

- Update custom database code – I don’t really want to install .net 7 on here and do a lot of work fixing my custom code when 7 is only in short-term support, so I will wait a few more months and look at moving a version onto .net 8 once it is released. As the newer versions of Umbraco also come with better native EF Core support, upgrading Umbraco to the latest version and then rewriting my own custom calls would make more sense at that point. Which is good, as not really needing any features from 11 or 12 had left me scrabbling for a good reason to actually upgrade this project to the newest version!

- Replace Windows-native code – This project calls FFmpeg Windows executables and some PowerShell scripting tools directly to work with video files. As these calls are currently made in a very Windows-dependent way, this code would have to be refactored to use a Linux-native equivalent (or ideally something fully cross-platform).

- Check file case sensitivity across all the project files – Linux (and Unix-like systems generally) uses case-sensitive filesystems, whereas Windows by default doesn’t, so the odd filename whose capitalisation mismatch was silently ignored on Windows will suddenly not be found on Linux. I had to rename a partial at one point due to case-sensitivity issues.

- Check Output Caching – None of my partials seemed to be caching on the Linux run, which I can spot very quickly as I have a cached ‘random pick’ panel sat in the website footer that only refreshes its ‘random’ choice once a minute to cut down on calls. This may just be an environmental issue due to something thinking it was running in development rather than production, but I didn’t delve too deep into that here.

- Perform more speed testing – In particular, I’m keen to find out the speed impact of using SQLite versus MS SQL. For mostly reads, as is usually the case for public visitors to an Umbraco site, SQLite is supposed to be very quick and would probably not cause any real degradation compared with the previous Windows setup on SQL Express, especially taking into account the much faster overall processor on this VM and the fact that Umbraco doesn’t call the database very often anyway thanks to its NuCache layer. However, some of my custom code for this project, commented out for this test, also does its own background writes to custom DB tables, and as SQLite only allows one writer at a time, there could be more of a performance implication there.
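For the reverse proxy step, a common NGINX setup is a server block that forwards requests to Kestrel listening only on the loopback interface. The function below is a minimal sketch that writes one out, following the directives in Microsoft’s guidance on hosting ASP.NET Core on Linux with Nginx; the domain, port 5000 and file paths are placeholders. The application would also need ASP.NET Core’s forwarded headers middleware (UseForwardedHeaders) so it sees the original scheme and client IP, which isn’t shown here.

```shell
#!/usr/bin/env bash
# Minimal sketch: generate an NGINX server block that reverse proxies to
# Kestrel on the loopback interface. Domain, port and output path are
# placeholders, not values from the original setup.

write_nginx_site() {
  local domain="$1" port="${2:-5000}" out="${3:-/dev/stdout}"
  # $domain and $port are filled in by the shell; \$ keeps NGINX's own
  # variables literal in the generated file.
  cat > "$out" <<EOF
server {
    listen 80;
    server_name $domain;

    location / {
        proxy_pass http://127.0.0.1:$port;
        proxy_http_version 1.1;
        proxy_set_header Upgrade \$http_upgrade;
        proxy_set_header Connection keep-alive;
        proxy_set_header Host \$host;
        proxy_cache_bypass \$http_upgrade;
        proxy_set_header X-Forwarded-For \$proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto \$scheme;
    }
}
EOF
}

# Example usage (Ubuntu's default NGINX layout):
#   write_nginx_site example.com 5000 /tmp/umbraco.conf
#   sudo mv /tmp/umbraco.conf /etc/nginx/sites-available/umbraco
#   sudo ln -s /etc/nginx/sites-available/umbraco /etc/nginx/sites-enabled/
#   sudo nginx -t && sudo systemctl reload nginx
# Kestrel then only needs to listen on loopback, explicitly as Production:
#   ASPNETCORE_ENVIRONMENT=Production ASPNETCORE_URLS=http://127.0.0.1:5000 dotnet name.dll
```

Setting ASPNETCORE_ENVIRONMENT=Production explicitly, as in the last usage line, is also a cheap way to rule out the ‘running in development’ theory for the missing output caching, though I haven’t verified that it makes a difference here.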
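For the case sensitivity check, a rough first pass is to look for explicit view paths that only resolve if capitalisation is ignored. This minimal sketch scans .cshtml files for full ~/Views/ paths and reports any whose target exists only with different casing. It assumes GNU grep and find, and partials referenced by short name (for example Html.PartialAsync("footer")) are not resolved, so it won’t catch everything.

```shell
#!/usr/bin/env bash
# Minimal sketch: report "~/Views/...cshtml" references whose target file only
# exists with different capitalisation (fine on Windows, broken on Linux).
# Only full "~/Views/" paths are checked; relies on GNU grep and find.

# Reports whether one relative path exists exactly, with different case, or not at all.
check_ref() {
  local root="$1" rel="$2" match
  [ -e "$root/$rel" ] && return 0
  match=$(find "$root" -ipath "$root/$rel" -print -quit)
  if [ -n "$match" ]; then
    echo "case mismatch: $rel -> ${match#"$root"/}"
  else
    echo "missing: $rel"
  fi
  return 1
}

# Scans every .cshtml file under a project root; returns 1 if any reference fails.
scan_views() {
  local root="$1" ref status=0
  while IFS= read -r ref; do
    # Quoting "~/" stops the shell from tilde-expanding it to $HOME.
    check_ref "$root" "${ref#"~/"}" || status=1
  done < <(grep -rhoE '~/Views/[^"]+\.cshtml' --include='*.cshtml' "$root" | sort -u)
  return "$status"
}

# Example usage:
#   scan_views ./MyUmbracoSite
```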

Indeed, performance is one important thing I’ve not touched on in much depth here, which may seem surprising given how much I opened with the speed of the VM, but this is for a reason. Everything appeared to run fast from a ‘quick eye’ test. However, due to the aforementioned lack of output caching on partials and the chunks of removed custom code, it would be unfair to compare this to my existing Windows version. I am, though, planning a follow-up blog post specifically on Umbraco performance in the near future, which will look at and compare this with some more consistent and controlled environments. And yes, as I alluded to earlier, everyone’s favourite Raspberry Pi will also be making a return there too!
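In the meantime, something slightly better than a ‘quick eye’ test is to time a batch of requests. This minimal sketch uses curl’s %{time_total} write-out variable and summarises the runs with awk; the URL and run count are placeholders, and with the first, possibly uncached request included in the figures it gives a rough indication rather than a proper benchmark.

```shell
#!/usr/bin/env bash
# Minimal sketch: time repeated requests to one URL with curl's %{time_total}
# write-out variable and summarise them with awk. URL and run count are
# placeholders; treat the output as a rough indication, not a benchmark.

time_url() {
  local url="$1" runs="${2:-10}" i
  for ((i = 0; i < runs; i++)); do
    # Discard the body; print only the total request time in seconds.
    curl -s -o /dev/null -w '%{time_total}\n' "$url"
  done | awk '
    { t = $1 + 0; sum += t }
    NR == 1 || t < min { min = t }
    NR == 1 || t > max { max = t }
    END {
      if (NR == 0) exit 1
      printf "runs=%d avg=%.3fs min=%.3fs max=%.3fs\n", NR, sum / NR, min, max
    }'
}

# Example usage:
#   time_url http://127.0.0.1:5000/ 20
```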
